Note: this article was written 315 days ago and last modified 207 days ago; some of the information may be out of date.


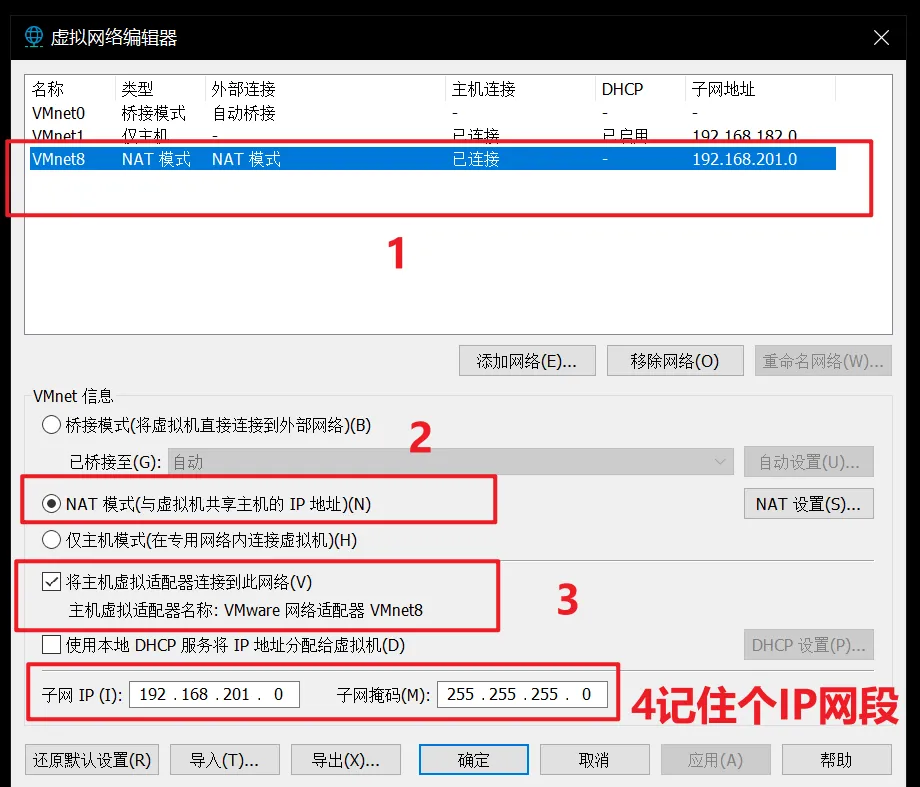

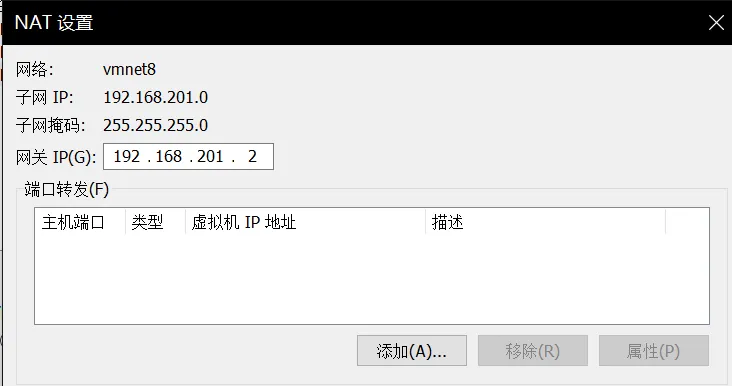

I. Virtual Machine Network Configuration

II. Environment Preparation

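1. Disable the firewall

Stop the firewall for the current session:

systemctl stop firewalld

Disable it permanently so that it does not start at boot:

systemctl disable firewalld

2. Disable swap

swapoff -a

vim /etc/fstab # comment out the swap line

3. Disable SELinux

setenforce 0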

4. Synchronize the server time

yum install -y ntpdate

ntpdate -u ntp.aliyun.com

timedatectl set-timezone Asia/Shanghai
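Both `swapoff -a` and `setenforce 0` only last until the next reboot, which is why the swap line in /etc/fstab also has to be commented out. The sketch below shows one way to script the two persistent edits instead of making them by hand. The function names are illustrative and not part of the original steps, and setting SELinux to `permissive` in /etc/selinux/config is an assumption chosen to match what `setenforce 0` does at runtime.

```shell
# Sketch: make the swap and SELinux changes survive a reboot.
# Each function takes the file path as an argument so it can be tried
# on a copy of the file first. Function names are hypothetical.

# Comment out every active fstab entry whose filesystem type is "swap".
comment_swap_entries() {
    fstab="$1"
    sed -E '/^[[:space:]]*[^#[:space:]][^[:space:]]*[[:space:]]+[^[:space:]]+[[:space:]]+swap[[:space:]]/ s/^/#/' \
        "$fstab" > "$fstab.tmp" &&
        cat "$fstab.tmp" > "$fstab" &&
        rm -f "$fstab.tmp"
}

# Rewrite the SELINUX= line of an SELinux config file (SELINUXTYPE= is left alone).
set_selinux_mode() {
    conf="$1"
    mode="$2"
    sed -E "s/^SELINUX=.*/SELINUX=$mode/" "$conf" > "$conf.tmp" &&
        cat "$conf.tmp" > "$conf" &&
        rm -f "$conf.tmp"
}

# Example (as root, on every node):
#   comment_swap_entries /etc/fstab
#   set_selinux_mode /etc/selinux/config permissive
```

Running the functions against a copy such as /tmp/fstab.test first is a cheap way to confirm that only the swap entry gets commented out.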

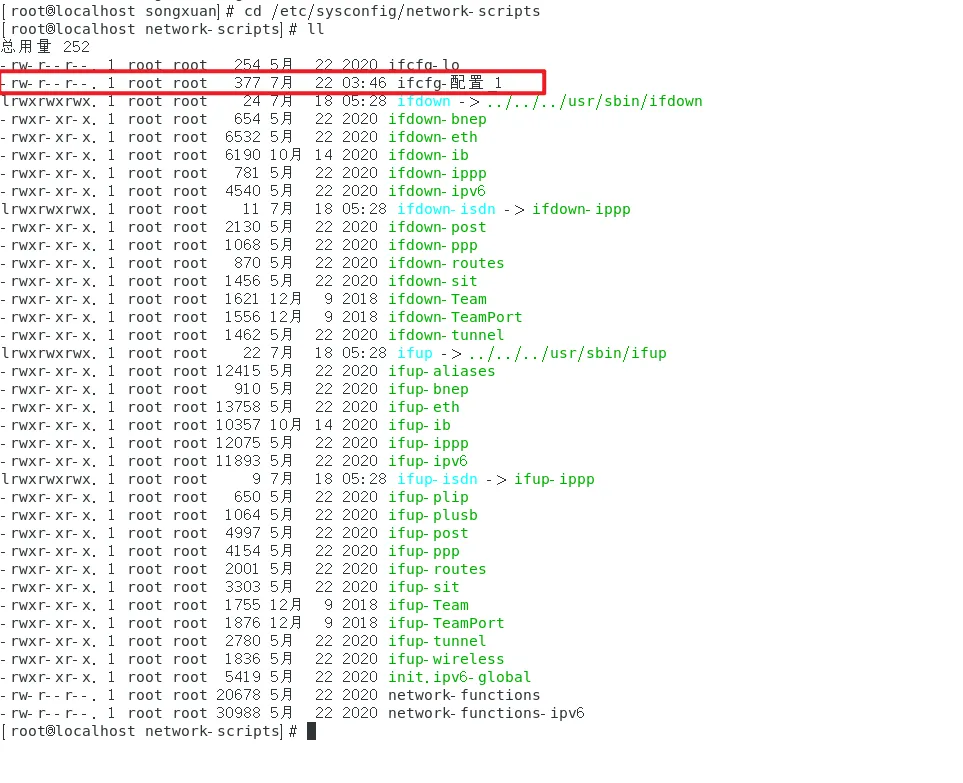

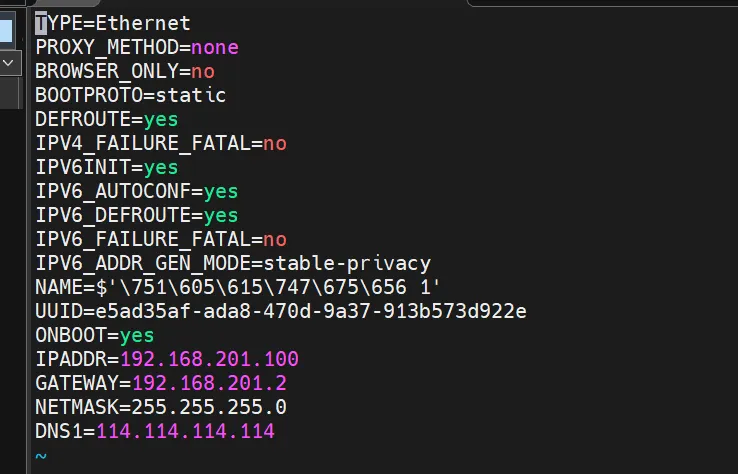

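III. Configure a Static IP for the Virtual Machines

cd /etc/sysconfig/network-scripts

1. Edit the network configuration

vi ifcfg-配置_1

2. Restart the network service after saving the changes

service network restart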
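The article does not show what goes into the ifcfg file, so the following is only a sketch of the keys a static configuration on CentOS 7 typically sets. The gateway and DNS values in the example call are placeholders that depend on the virtual network, and the function name is hypothetical.

```shell
# Sketch: print the static-IP settings for an ifcfg-* file.
# Arguments: IP address, gateway, DNS server. A /24 netmask is assumed.
render_static_ifcfg() {
    ip_addr="$1"
    gateway="$2"
    dns="$3"
    cat <<EOF
BOOTPROTO=static
ONBOOT=yes
IPADDR=$ip_addr
NETMASK=255.255.255.0
GATEWAY=$gateway
DNS1=$dns
EOF
}

# Example: review the output, then merge these keys into the existing
# ifcfg file by hand (gateway and DNS below are placeholder values):
#   render_static_ifcfg 192.168.201.100 192.168.201.2 223.5.5.5
```

Existing keys in the file such as NAME, DEVICE and UUID should stay as they are; only the keys printed above need to be added or changed.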

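IV. Prepare the Three Virtual Machines

- IP addresses: 192.168.201.100, 192.168.201.101, 192.168.201.102, 192.168.201.103

- Set the hostname on each node and add all nodes to /etc/hosts

hostnamectl set-hostname k8s-Master01 # run on the master node

hostnamectl set-hostname k8s-node01 # run on the first worker node

hostnamectl set-hostname k8s-node02 # run on the second worker node

cat >> /etc/hosts <<EOF
192.168.201.100 k8s-Master01
192.168.201.101 k8s-node01
192.168.201.102 k8s-node02
192.168.201.104 k8s-node03
EOF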
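Because `cat >> /etc/hosts` appends every time it runs, repeating the step leaves duplicate entries. A minimal sketch of an idempotent alternative follows; the function name is hypothetical, and it only checks whether the hostname already appears on a non-comment line.

```shell
# Sketch: append "IP hostname" to a hosts file only if the hostname
# is not already mapped there. Function name is hypothetical.
add_host_entry() {
    hosts_file="$1"
    host_ip="$2"
    host_name="$3"
    # awk exits 0 when some non-comment line already lists the hostname.
    if ! awk -v n="$host_name" '
            $1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
            END { exit !found }' "$hosts_file"; then
        printf '%s %s\n' "$host_ip" "$host_name" >> "$hosts_file"
    fi
}

# Example (as root, on every node):
#   add_host_entry /etc/hosts 192.168.201.100 k8s-Master01
#   add_host_entry /etc/hosts 192.168.201.101 k8s-node01
#   add_host_entry /etc/hosts 192.168.201.102 k8s-node02
```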

- The default CentOS 7 yum repositories are no longer usable, so the yum source has to be changed. First back up the existing repo files:

cd /etc/yum.repos.d/

mkdir backup

mv *.repo backup/

- Configure the Aliyun mirror

wget -O /etc/yum.repos.d/CentOS-Base.repo https://mirrors.aliyun.com/repo/Centos-7.repo

- Rebuild the cache

yum clean all

yum makecache

V. Install containerd

- Install yum-config-manager and its related dependencies

yum install -y yum-utils device-mapper-persistent-data lvm2

- Add the yum repository that provides containerd

yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

- Install containerd

yum install -y containerd.io cri-tools

- Configure containerd

cat > /etc/containerd/config.toml <<EOF
disabled_plugins = ["restart"]
[plugins.linux]
shim_debug = true
[plugins.cri.registry.mirrors."docker.io"]
endpoint = ["https://frz7i079.mirror.aliyuncs.com"]
[plugins.cri]
sandbox_image = "registry.aliyuncs.com/google_containers/pause:3.2"
EOF
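Since v1.22, kubeadm configures the kubelet to use the systemd cgroup driver by default, and containerd is normally set to match. The hand-written config above does not touch that setting, so the following is a sketch of a commonly used alternative rather than the article's method: start from the output of `containerd config default` and switch `SystemdCgroup` to `true`. The function name is hypothetical, and whether this change is needed depends on the containerd and kubelet versions in use.

```shell
# Sketch: flip "SystemdCgroup = false" to "true" in a containerd config file.
# Function name is hypothetical; indentation of the matched line is preserved.
enable_systemd_cgroup() {
    conf="$1"
    sed -E 's/^([[:space:]]*SystemdCgroup[[:space:]]*=[[:space:]]*)false/\1true/' \
        "$conf" > "$conf.tmp" &&
        cat "$conf.tmp" > "$conf" &&
        rm -f "$conf.tmp"
}

# Example (as root), assuming the generated default config is acceptable
# as a starting point for this cluster:
#   containerd config default > /etc/containerd/config.toml
#   enable_systemd_cgroup /etc/containerd/config.toml
#   systemctl restart containerd
```

A generated default config does not contain the registry mirror or the `sandbox_image` override from the step above, so those would have to be re-applied to it.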

- Start the containerd service and enable it at boot

systemctl enable containerd && systemctl start containerd && systemctl status containerd

- Configure the kernel modules to load at boot for containerd

cat > /etc/modules-load.d/containerd.conf <<EOF
overlay
br_netfilter
EOF

- Configure the kernel network parameters for Kubernetes

cat > /etc/sysctl.d/k8s.conf <<EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
EOF

- Load the overlay and br_netfilter modules

modprobe overlay

modprobe br_netfilter

- Apply the settings and check that they took effect

sysctl -p /etc/sysctl.d/k8s.conf
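`sysctl -p` prints the values it applies, but it can be useful to confirm afterwards that the running kernel really holds them, for example after a reboot. The sketch below compares each `key = value` line of a sysctl file with the corresponding file under /proc/sys. The function name and the optional second argument (an alternative root directory, handy for dry runs) are illustrative only.

```shell
# Sketch: verify that the live kernel values match a sysctl config file.
# Usage: check_sysctl_conf FILE [PROC_ROOT]   (PROC_ROOT defaults to /proc/sys)
# Prints one line per mismatch and returns non-zero if any were found.
check_sysctl_conf() {
    conf="$1"
    root="${2:-/proc/sys}"
    rc=0
    while IFS='=' read -r key value; do
        key=$(printf '%s' "$key" | tr -d '[:space:]')
        value=$(printf '%s' "$value" | tr -d '[:space:]')
        # Skip blank lines and comments.
        case "$key" in
            '' | '#'*) continue ;;
        esac
        # net.ipv4.ip_forward -> net/ipv4/ip_forward
        proc_file="$root/$(printf '%s' "$key" | tr . /)"
        actual=$(cat "$proc_file" 2>/dev/null | tr -d '[:space:]')
        if [ "$actual" != "$value" ]; then
            echo "MISMATCH $key: expected $value, got ${actual:-<missing>}"
            rc=1
        fi
    done < "$conf"
    return $rc
}

# Example:
#   check_sysctl_conf /etc/sysctl.d/k8s.conf && echo "all settings active"
```

A `<missing>` result for the two `net.bridge.*` keys usually means the br_netfilter module is not loaded yet.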

VI. Install the Kubernetes Cluster

- Add the repository

yum repolist

cat <<EOF > kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

mv kubernetes.repo /etc/yum.repos.d

- Install Kubernetes

# Install the latest version

yum install -y kubelet kubeadm kubectl

# Or install a specific version

yum install -y kubelet-1.26.0 kubectl-1.26.0 kubeadm-1.26.0

# Enable and start kubelet

systemctl enable kubelet && systemctl start kubelet && systemctl status kubelet

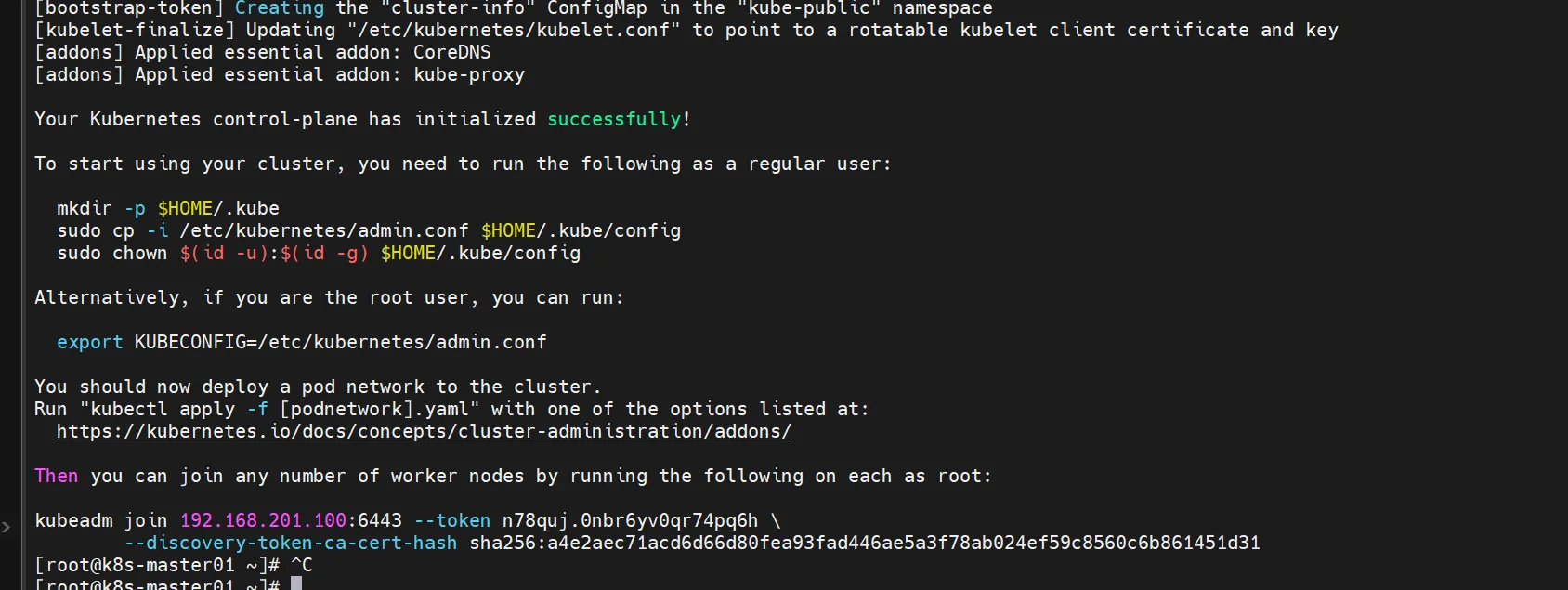

- Initialize the cluster. Run this step on the control-plane (master) node only.

Note

kubeadm init is run once, on the master node; the worker nodes join afterwards with kubeadm join.

kubeadm init \
--apiserver-advertise-address=192.168.201.100 \
--pod-network-cidr=10.244.0.0/16 \
--image-repository registry.aliyuncs.com/google_containers \
--cri-socket=unix:///var/run/containerd/containerd.sock
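The same settings can also be kept in a kubeadm configuration file and passed with `kubeadm init --config`, which makes them easier to review and reuse. The sketch below renders such a file from the values used in the command above. It assumes the `kubeadm.k8s.io/v1beta3` API version, which matches kubeadm 1.26 but may differ for other releases; the function name and output file name are hypothetical.

```shell
# Sketch: print a kubeadm config equivalent to the flags used above.
# Arguments: advertise address, pod CIDR, Kubernetes version (without "v").
# The apiVersion is an assumption that fits kubeadm 1.26.
render_kubeadm_config() {
    advertise_ip="$1"
    pod_cidr="$2"
    k8s_version="$3"
    cat <<EOF
apiVersion: kubeadm.k8s.io/v1beta3
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: $advertise_ip
nodeRegistration:
  criSocket: unix:///var/run/containerd/containerd.sock
---
apiVersion: kubeadm.k8s.io/v1beta3
kind: ClusterConfiguration
kubernetesVersion: v$k8s_version
imageRepository: registry.aliyuncs.com/google_containers
networking:
  podSubnet: $pod_cidr
EOF
}

# Example (master node only):
#   render_kubeadm_config 192.168.201.100 10.244.0.0/16 1.26.0 > kubeadm-config.yaml
#   kubeadm init --config kubeadm-config.yaml
```

Whichever form is used, the pod subnet has to match the `Network` value in the flannel configuration (10.244.0.0/16 in this article).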

- After initialization completes, set up kubectl access on the master node; the worker nodes can then join the cluster

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

- On each worker node, join the cluster with the token and hash shown in the screenshot

kubeadm join 192.168.201.100:6443 --token n78quj.0nbr6yv0qr74pq6h \
--discovery-token-ca-cert-hash sha256:a4e2aec71acd6d66d80fea93fad446ae5a3f78ab024ef59c8560c6b861451d31

Related information

If joining fails, clean up the configuration and join again. Run the reset command:

kubeadm reset --force

Remove the leftover files:

rm -f /etc/kubernetes/kubelet.conf

rm -f /etc/kubernetes/bootstrap-kubelet.conf

rm -f /etc/kubernetes/pki/ca.crt

Note

After the virtual machines were restarted, one worker node kept failing to come up.

Clean up the leftover files:

sudo rm -f /etc/kubernetes/pki/ca.crt

sudo rm -rf /etc/kubernetes/manifests/

Run the join command again:

kubeadm join 192.168.201.100:6443 --token 3glsa8.8q59hc078gz6382m \
--discovery-token-ca-cert-hash sha256:729e927fa4450c6c84b4912a15e505a4f3204c7ca625913e0e20945e0a5a36d1

The join command can be generated on the master node with:

kubeadm token create --print-join-command
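Bootstrap tokens expire after 24 hours by default, which is one reason the second join above uses a different token from the first. When a join command is copied by hand, a quick format check can catch truncated values before they reach `kubeadm join`. The sketch below validates the token and CA hash formats and prints the assembled command; the function name is hypothetical.

```shell
# Sketch: validate a bootstrap token and CA cert hash, then print the
# matching "kubeadm join" command. Function name is hypothetical.
build_join_command() {
    endpoint="$1"
    token="$2"
    ca_hash="$3"
    # Bootstrap tokens have the form <6 chars>.<16 chars>, lowercase alphanumerics.
    if ! printf '%s\n' "$token" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'; then
        echo "bad token format: $token" >&2
        return 1
    fi
    # The discovery hash is "sha256:" followed by 64 hex digits.
    if ! printf '%s\n' "$ca_hash" | grep -Eq '^sha256:[0-9a-f]{64}$'; then
        echo "bad CA hash format: $ca_hash" >&2
        return 1
    fi
    printf 'kubeadm join %s --token %s --discovery-token-ca-cert-hash %s\n' \
        "$endpoint" "$token" "$ca_hash"
}

# Example, using the values from the rejoin step above:
#   build_join_command 192.168.201.100:6443 3glsa8.8q59hc078gz6382m \
#       sha256:729e927fa4450c6c84b4912a15e505a4f3204c7ca625913e0e20945e0a5a36d1
```

This only checks the shape of the values. Whether a token is still valid can be seen on the master node with `kubeadm token list`.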

- Configure the cluster network

vi kube-flannel.yaml

---
kind: Namespace
apiVersion: v1
metadata:
  name: kube-flannel
  labels:
    k8s-app: flannel
    pod-security.kubernetes.io/enforce: privileged
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: flannel
  name: flannel
rules:
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - get
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: flannel
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-flannel
---
apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: flannel
  name: flannel
  namespace: kube-flannel
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-flannel
  labels:
    tier: node
    k8s-app: flannel
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "EnableNFTables": false,
      "Backend": {
        "Type": "vxlan"
      }
    }
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-flannel
  labels:
    tier: node
    app: flannel
    k8s-app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni-plugin
        image: ghcr.io/flannel-io/flannel-cni-plugin:v1.7.1-flannel1
        command:
        - cp
        args:
        - -f
        - /flannel
        - /opt/cni/bin/flannel
        volumeMounts:
        - name: cni-plugin
          mountPath: /opt/cni/bin
      - name: install-cni
        image: ghcr.io/flannel-io/flannel:v0.27.2
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: ghcr.io/flannel-io/flannel:v0.27.2
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN", "NET_RAW"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        - name: EVENT_QUEUE_DEPTH
          value: "5000"
        - name: CONT_WHEN_CACHE_NOT_READY
          value: "false"
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
        - name: xtables-lock
          mountPath: /run/xtables.lock
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni-plugin
        hostPath:
          path: /opt/cni/bin
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg
      - name: xtables-lock
        hostPath:
          path: /run/xtables.lock
          type: FileOrCreate
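The manifest only takes effect once it is applied to the cluster, typically with `kubectl apply -f kube-flannel.yaml` on the master node. Nodes usually report NotReady until a CNI plugin such as flannel is running, so a quick readiness check afterwards is useful. The sketch below filters the output of `kubectl get nodes --no-headers` and prints the nodes that are not Ready yet; the function name is hypothetical.

```shell
# Sketch: read "kubectl get nodes --no-headers" output on stdin and print
# the names of nodes whose STATUS column does not start with "Ready".
# Function name is hypothetical.
not_ready_nodes() {
    awk '$2 !~ /^Ready(,|$)/ { print $1 }'
}

# Example (master node):
#   kubectl apply -f kube-flannel.yaml
#   kubectl get pods -n kube-flannel
#   kubectl get nodes --no-headers | not_ready_nodes
```

An empty result means every node reports Ready. Nodes that stay listed for more than a few minutes are worth inspecting with `kubectl describe node` and the flannel pod logs.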

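Author: 松轩(^U^)

Article link:

Copyright notice: unless otherwise stated, all articles on this blog are licensed under BY-NC-SA. Please credit the source when reposting!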
